Copilot for Instructional Designers

Most instructional designers spend more time on admin than actual design. Microsoft Copilot can handle the repetitive 60% so you can focus on the creative 40% that actually matters.

You'll walk away with

Requires Microsoft 365 Copilot licence

The ID's Copilot Advantage

Where AI fits in your design process

Research shows 60% of ID time goes to content gathering, formatting, and admin. Only 40% goes to the actual design decisions - learning architecture, interaction design, assessment strategy. Copilot targets the 60%.

Where Copilot fits in ADDIE

Analysis - Synthesise needs data, interview transcripts, survey results

Design - Draft objectives, outline structure, map assessments

Development - Generate content drafts, assessment items, scenarios

Implementation - Create facilitator guides, comms, rollout plans

Evaluation - Summarise feedback, identify patterns, recommend improvements

Where Copilot does NOT replace you

Pedagogical decisions. Learner experience design. Emotional intelligence in scenario writing. Stakeholder relationship management. Visual design judgement. The ID is the architect. Copilot is the drafting assistant.

The quality control mindset

Every Copilot output is a first draft. Your expertise is in knowing what good looks like and editing accordingly. Speed without quality is just faster failure.

Setting up your ID workspace

Create a Copilot Notebook per project. Add your design documents, style guides, brand voice docs, and previous exemplars as references. This becomes your grounded context for every prompt in the course.

Check your understanding

An instructional designer uses Copilot to generate a course outline. What should they do next?

Every Copilot output is a first draft. Your expertise as an ID is in knowing what good looks like - reviewing for pedagogical soundness, source accuracy, and learner experience quality before the content moves forward.

SME Content Extraction

From brain dump to structured content

You know the drill. Your SME sends a 47-page procedure manual, three email threads, a recorded Teams call, and a sticky note that says "also mention the new form." Turning this into a coherent course outline takes days. Copilot does the heavy lifting in minutes.

The extraction workflow

Add all SME materials to a Copilot Notebook

Procedure docs, email threads, meeting transcripts, policy documents - everything goes in.

Set the Notebook instructions

"You are helping an instructional designer extract training content from these source materials. Organise information by topic, flag contradictions between sources, and identify gaps."

Run the extraction prompts

Use the prompts below to systematically pull structured content from the chaos.

Ready-to-use prompts

Summarise the key procedures described across all sources. Group by task, not by document.

Identify the top 10 things a new employee needs to know from these materials, ranked by frequency of mention.

Flag any contradictions between [Document A] and [Document B]. List each contradiction with page references.

What topics are mentioned but not explained in enough detail for a learner? List the gaps I need to follow up with the SME about.

The follow-up question technique

After the initial extraction, ask: "What questions would a new learner have after reading this summary?" Copilot generates the questions your SME hasn't anticipated - and those become your FAQ section or knowledge check items.

Check your understanding

Your SME sends you a 40-page procedure manual and says "make a course from this." What's the most effective first Copilot prompt?

Extraction before creation. You need to understand the content structure, identify issues, and find gaps before designing the course. Jumping straight to course creation skips the analysis that prevents rework later.

Learning Objectives That Actually Work

Bloom's-aligned, measurable, useful

Without guidance, Copilot defaults to vague objectives like "Understand the importance of safety." That's not measurable, not aligned to Bloom's, and not useful for assessment design. The fix is a structured prompt framework.

The Bloom's prompt framework

"Based on [source material], generate [number] learning objectives at [Bloom's level] using action verbs from [Bloom's level]. Each objective must include: the action verb, the specific content, and the condition or context."
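If you reuse this framework across projects, it can help to keep it as a fill-in template so no bracketed slot is left unfilled by accident. The snippet below is a minimal sketch in Python using only the standard library. The function name, field names, and example values are illustrative assumptions, not part of Copilot.

```python
from string import Template

# Wording mirrors the framework above; the $placeholders are hypothetical field names.
BLOOMS_PROMPT = Template(
    "Based on $source, generate $count learning objectives at the $level level "
    "using action verbs from the $level level. Each objective must include: "
    "the action verb, the specific content, and the condition or context."
)


def build_blooms_prompt(source: str, count: int, level: str) -> str:
    """Fill the framework's slots and return a prompt ready to paste into Copilot."""
    if count < 1:
        raise ValueError("count must be at least 1")
    # substitute() raises KeyError if a slot is missing, so gaps fail loudly.
    return BLOOMS_PROMPT.substitute(source=source, count=count, level=level)


# Example values are made up for illustration.
print(build_blooms_prompt("the manual handling policy", 5, "Apply"))
```

The output is plain text, so it pastes straight into a Copilot chat or Notebook.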

Prompts by Bloom's level

Generate 5 knowledge- and comprehension-level objectives that require learners to recall or explain key facts from [topic]. Use verbs: identify, describe, explain, list, define.

Generate 5 application- and analysis-level objectives where learners must apply procedures or analyse scenarios. Use verbs: apply, demonstrate, calculate, compare, differentiate.

Generate 3 higher-order objectives where learners must evaluate options or create solutions. Use verbs: justify, critique, design, propose, recommend.

The alignment check

After generating objectives, ask Copilot: "For each objective, suggest one assessment method that would accurately measure whether a learner has achieved it." This is a quick measurability test: if Copilot cannot suggest a credible assessment method for an objective, that objective probably needs rewriting.

Based on [regulation/policy document], generate learning objectives that cover every compliance requirement mentioned. Flag which requirements are critical (must-know) vs supporting (nice-to-know).

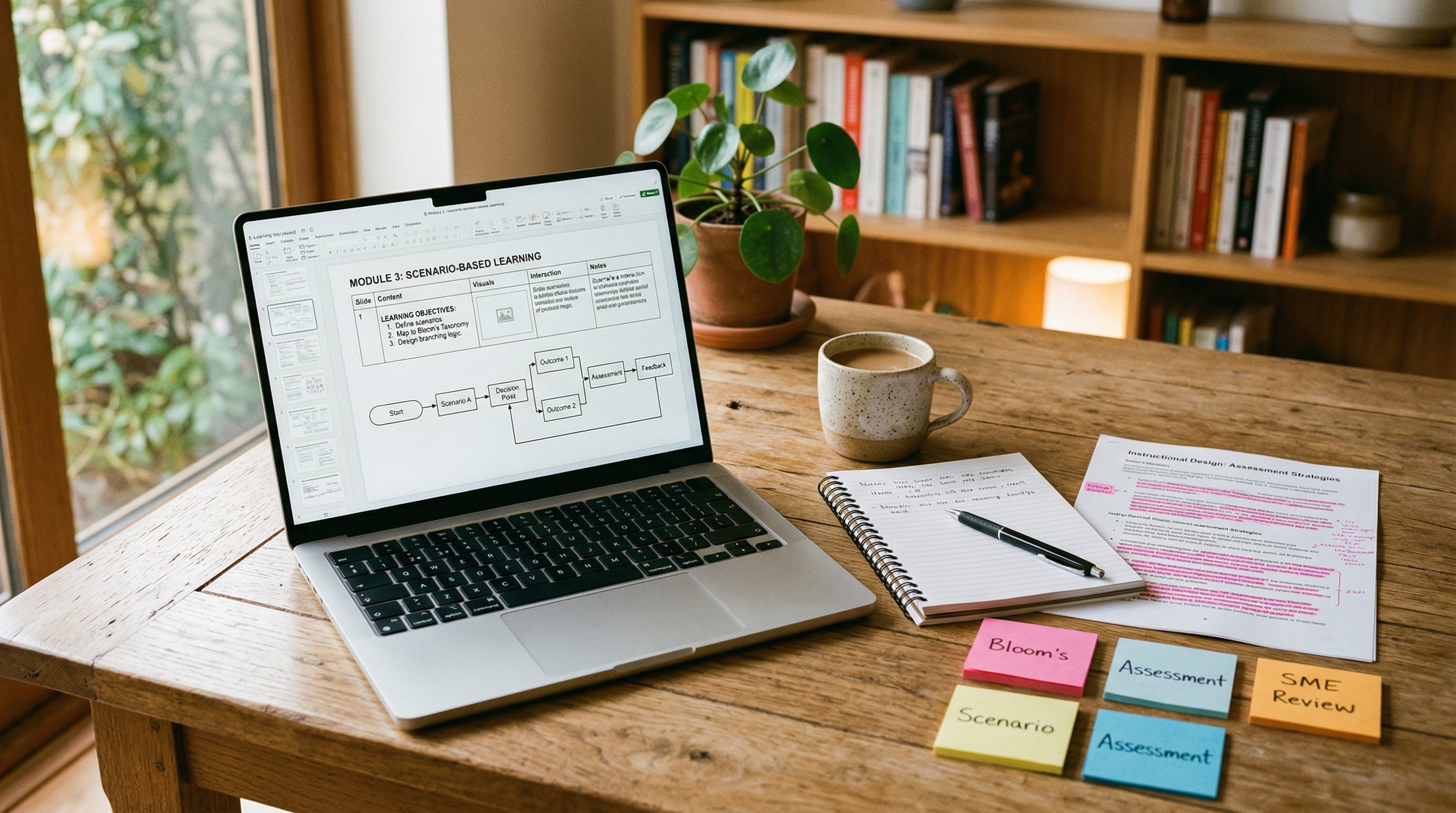

Outlines & Storyboards

From objectives to course architecture

Once you have validated learning objectives, Copilot can draft the course architecture. The key is giving it enough structure in your prompt to produce something genuinely useful - not a generic outline you'll throw away.

From objectives to structure

Based on these [X] learning objectives, create a course outline with logical module grouping, suggested sequence, and estimated duration per module.

The storyboard prompt

Create a storyboard for Module [X] with the following columns: Screen number, Screen title, On-screen text (max 50 words), Visual description, Interaction type, Narration script, Developer notes. Base content on [source documents].
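Copilot does not always respect the 50-word cap on on-screen text, so a quick check after export can catch overlong screens. The sketch below assumes you have saved the storyboard as rows keyed by the column names from the prompt above. The function name and sample rows are hypothetical.

```python
ON_SCREEN_WORD_LIMIT = 50  # the cap stated in the storyboard prompt


def screens_over_limit(rows: list[dict[str, str]]) -> list[str]:
    """Return the screen numbers whose on-screen text exceeds the word limit."""
    return [
        row["Screen number"]
        for row in rows
        if len(row["On-screen text"].split()) > ON_SCREEN_WORD_LIMIT
    ]


# Made-up sample rows: screen 2 carries 51 words of on-screen text.
rows = [
    {"Screen number": "1", "On-screen text": "Welcome to the module."},
    {"Screen number": "2", "On-screen text": "word " * 51},
]
print(screens_over_limit(rows))  # ['2']
```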

Interaction suggestions

For each screen in this storyboard, suggest an appropriate interaction type from this list: click-to-reveal, drag-and-drop, multiple choice, scenario branch, hotspot, timeline, process steps. Justify each choice based on the learning objective it supports.

Chunking strategy

Ask Copilot to break content into chunks of no more than 3 key points per screen, with each chunk taking approximately 2 minutes to complete. Small chunks limit how much a learner has to hold in working memory at once, which is the central concern of cognitive load theory, and they keep the pace moving.
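The chunking rule also gives you a rough duration estimate before you build anything. Here is a minimal sketch of the arithmetic, assuming the 3-points-per-screen and 2-minutes-per-screen figures above. The key points are placeholders.

```python
MAX_POINTS_PER_SCREEN = 3  # from the chunking guidance above
MINUTES_PER_SCREEN = 2     # approximate, from the same guidance


def chunk_points(points: list[str], size: int = MAX_POINTS_PER_SCREEN) -> list[list[str]]:
    """Split a flat list of key points into screens of at most `size` points."""
    return [points[i:i + size] for i in range(0, len(points), size)]


# Seven placeholder key points for one module.
points = [f"Key point {n}" for n in range(1, 8)]
screens = chunk_points(points)
estimated_minutes = len(screens) * MINUTES_PER_SCREEN

print(len(screens), estimated_minutes)  # 3 6
```

Seven key points become three screens (3 + 3 + 1), or roughly six minutes of seat time.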

Review-ready output

Ask Copilot to format the storyboard as a table that stakeholders can comment on, and to add a "Review question" column: "For each screen, add a question for the SME reviewer to confirm accuracy." Pointing reviewers at specific questions can save a round of review.

Assessment Items at Scale

Varied, grounded, ready to deploy

Writing good quiz questions takes longer than writing the content they assess. Most IDs copy-paste from the source material and turn statements into questions. Copilot can generate items systematically and with variety - if you tell it exactly what you need.

Multi-format generation

Based on [learning objective], generate assessment items in the following formats: (a) 2 multiple choice questions with 4 options each, including plausible distractors, (b) 1 scenario-based question where the learner applies knowledge to a realistic situation, (c) 1 true/false question targeting a common misconception, (d) 1 matching exercise. For each item, cite the source material that validates the correct answer.

Distractor quality control

For each multiple choice question, ensure distractors are: plausible but clearly incorrect, similar in length and structure to the correct answer, and based on common misconceptions or errors rather than obviously wrong statements.
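The "similar in length" criterion is easy to screen for mechanically before you do the editorial pass. The sketch below flags items where the correct answer is much longer than the average distractor. The 1.5x threshold and the function name are assumptions, so tune them to your own item bank.

```python
from statistics import mean


def has_length_cue(correct: str, distractors: list[str], ratio: float = 1.5) -> bool:
    """Return True when the correct answer has more than `ratio` times
    the average word count of the distractors."""
    if not distractors:
        raise ValueError("at least one distractor is required")
    average_words = mean(len(d.split()) for d in distractors)
    return len(correct.split()) > ratio * average_words


# Made-up item: a 13-word correct answer against 2-3 word distractors.
flagged = has_length_cue(
    "Report the incident to your supervisor within 24 hours using the online form",
    ["Ignore it", "Tell a colleague", "Wait a week"],
)
print(flagged)  # True
```

A flag only tells you where to look. Whether the distractors are plausible is still your call.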

Scenario-based assessment

Create a workplace scenario for [role] where the learner must [apply specific procedure]. Present 3-4 decision points, each with 3 options. For each decision, explain why the correct option is right and why each distractor represents a common mistake.

Assessment bank strategy

Generate 3x more items than you need. Cherry-pick the best. Use the surplus for pre/post tests, remediation paths, or recertification assessments. This is where Copilot's speed pays dividends - you're building an assessment library, not just a single quiz.

Check your understanding

Which prompt would generate the highest quality learning objectives?

Specificity drives quality. Specifying the Bloom's level, the number of objectives, the action verb category, and the context (clinical scenarios) gives Copilot the constraints it needs to produce measurable, useful objectives.

Scenario Writing

Realistic branching that feels authentic

Good scenarios need emotional context, realistic dialogue, organisational politics, and consequences that feel authentic. Copilot gives you the skeleton. You add the soul.

The scenario brief prompt

Create a branching scenario for [role] dealing with [situation]. The learner must make 3 decisions. For each decision point, provide 3 options: one correct, one partially correct (common shortcut), and one incorrect (common mistake). Include realistic dialogue and describe the consequence of each choice.

Character and context generation

Create a cast of 3 characters for this scenario. Each character should have a name, role, personality trait, and communication style. One should be supportive, one should be resistant, and one should be a wildcard. Write dialogue that reflects these personalities.

Healthcare-specific scenarios

Set this scenario in a [healthcare setting]. The learner is a [role]. The situation involves [clinical/compliance topic]. Include realistic details: patient names (fictional), department references, time pressures, and documentation requirements that would apply in an Australian healthcare context.

The human editing pass

Copilot's scenarios read competently but generically. Your job: add the specific organisational culture, the political dynamics, the emotional weight. Change the names to feel real. Add the detail that makes a learner think "this has happened to me."

Review Cycles

Taming feedback chaos

You send a storyboard for review. Three SMEs send back conflicting feedback in different formats - track changes, email replies, and verbal comments in a meeting. Reconciling this takes as long as writing the storyboard. Copilot can consolidate the chaos.

Feedback consolidation

Consolidate all reviewer feedback. For each piece of feedback, list: who gave it, what they said, which screen/section it applies to, and whether it conflicts with feedback from another reviewer.
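Once Copilot returns the consolidated list, a small script can show which screens drew comments from more than one reviewer, which is where conflicts are most likely to sit. This is a rough sketch: the `Feedback` structure and sample comments are hypothetical, and the code only flags overlap. It cannot judge whether two comments actually disagree.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Feedback:
    reviewer: str
    screen: str
    comment: str


def screens_with_multiple_reviewers(items: list[Feedback]) -> dict[str, list[Feedback]]:
    """Group feedback by screen and keep screens with two or more distinct reviewers."""
    by_screen: dict[str, list[Feedback]] = defaultdict(list)
    for item in items:
        by_screen[item.screen].append(item)
    return {
        screen: feedback
        for screen, feedback in by_screen.items()
        if len({f.reviewer for f in feedback}) > 1
    }


# Made-up feedback from three reviewers.
items = [
    Feedback("SME A", "Screen 4", "Remove the second example."),
    Feedback("SME B", "Screen 4", "Expand the second example."),
    Feedback("SME C", "Screen 7", "Update the form name."),
]
overlaps = screens_with_multiple_reviewers(items)
print(list(overlaps))  # ['Screen 4']
```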

Conflict resolution draft

Identify all conflicting feedback between reviewers. For each conflict, summarise both positions and draft a neutral email to both reviewers asking for clarification, with a suggested resolution.

Change log generation

Based on [Version 1] and [Version 2] of this storyboard, create a change log showing: what changed, why (linked to reviewer feedback), and who requested it. Format as a table.

Sign-off email drafts

Draft an email to [Stakeholder] summarising the changes made based on their feedback, confirming what was incorporated and explaining why any suggestions were not included. Professional, warm tone.

Check your understanding

You've used Copilot to generate 20 multiple choice questions. Five of them have an obviously correct answer that's longer than the distractors. What should you do?

This is a common AI output pattern - correct answers tend to be more detailed. The fix is editorial: equalise the length and specificity of all options. The other 15 questions may be fine. Quality control is the ID's value-add.

5 ID Workflows That Save Hours Every Week

Copy, paste, apply, reclaim your time

Every workflow below follows the same pattern: a real ID problem, the Copilot approach, and the time you get back. These are the exact workflows an experienced instructional designer uses daily.

Needs Analysis Synthesis

Problem: You have interview transcripts, survey data, performance reports, and stakeholder emails, and synthesising them into a needs analysis takes 2-3 days.

Time saved: 2-3 days down to 2-3 hours.

Analyse all sources and identify: (1) Performance gaps mentioned by 2+ sources, (2) Root causes (knowledge vs skill vs motivation vs environment), (3) Topics ranked by urgency and frequency, (4) Recommended training interventions for each gap.

Rapid Content Outline from Policy Documents

Problem: You're given a 60-page policy and asked to build compliance training by end of week.

Time saved: Full day down to 1 hour.

Extract all must-know requirements from this policy. Group by topic. For each topic, draft 2 learning objectives and suggest one interaction type. Flag any section that requires SME clarification.

Facilitator Guide Generation

Problem: You've built the eLearning but the facilitator guide is always last and always rushed.

Time saved: 4-6 hours down to 1 hour.

Based on this course storyboard, generate a facilitator guide with: session overview, timing, talking points per slide, discussion questions, activity instructions, and common learner questions with suggested responses.

Learner Communication Templates

Problem: Pre-course emails, reminder nudges, completion certificates, manager briefings - all need writing.

Time saved: 2 hours down to 15 minutes.

Generate a communication pack for [course name]: (1) Pre-course email explaining what to expect, (2) Day-before reminder, (3) Completion congratulations with key takeaways summary, (4) Manager briefing on what their team learned and how to reinforce it.

Post-Training Evaluation Summary

Problem: 200 survey responses. Your stakeholder wants a summary by Monday.

Time saved: Half day down to 30 minutes.

Summarise evaluation results. Group by theme. Highlight: overall satisfaction, top 3 strengths, top 3 improvement areas, and specific quotes that illustrate each point. Recommend 3 actions for the next iteration.

Check your understanding

Copilot generates a branching scenario that's technically accurate but feels generic. What's the most effective improvement?

Copilot produces competent skeletons. The ID adds authenticity - the specific details that make learners think "this could happen to me." That's the creative 40% that makes learning stick.

Your commitment

Which workflow will you try first this week?

Course complete

You now have the exact Copilot workflows an experienced ID uses daily. The difference between knowing and doing is one prompt.

What you've learned

Built by Nic Gallardo | nicgallardo.com | Updated March 2026